Deploying AI in the enterprise has become a central focus for organizations that want to put artificial intelligence to practical use. As businesses invest more in AI deployment strategies, they often underestimate how hard it is to integrate these technologies into existing systems. Methods like fine-tuning and retrieval augmented generation (RAG) are well understood; the real test lies in applying them to specific needs. Aleph Alpha’s approach to sovereign AI highlights the importance of using homegrown datasets and documenting institutional knowledge. With attention to AI training architecture as well, organizations can navigate these complexities and get more value from AI in their operations.

Bringing artificial intelligence into an organization, sometimes called AI integration, covers several methods, including customizing AI models and adding retrieval techniques. The concept of sovereign AI, which emphasizes training models on domestic data, is gaining traction as companies look for solutions tailored to their own requirements. Combined with newer training architectures, these methods can improve a business’s capacity to deploy AI applications that deliver value.

Understanding AI Deployment Strategies in Enterprises

Deploying AI in the enterprise landscape requires a nuanced understanding of various deployment strategies. Organizations have traditionally relied on methods such as fine-tuning to adapt large language models (LLMs) for specific tasks. However, simply fine-tuning a model often falls short of achieving the desired outcomes. Businesses must recognize that successful AI deployment goes beyond just tweaking parameters; it involves a comprehensive strategy that integrates the model into existing workflows and ensures it addresses the unique challenges faced by the organization.

Moreover, retrieval augmented generation (RAG) has emerged as a compelling alternative in AI deployment strategies. By treating the LLM as a librarian that retrieves information from an external database, RAG allows businesses to keep their models' answers current without constant retraining. This approach is particularly beneficial in environments where information changes rapidly, because updating the database is enough to keep outputs accurate and relevant. RAG can only retrieve what has been written down, however, so enterprises must document their institutional knowledge and manage that data in a structured way to get the full benefit.

The Role of Fine-Tuning Models in Effective AI Deployment

Fine-tuning models plays a pivotal role in optimizing AI for specific tasks within an enterprise. While it is a powerful tool for adapting pre-trained models, it is not a panacea for all deployment challenges. Organizations must approach fine-tuning with a clear understanding of its limitations. As noted by industry experts, fine-tuning can modify a model’s existing behavior but may not equip it with new information necessary for complex problem-solving. Businesses should consider fine-tuning as part of a broader AI strategy that may include other methods like RAG or even the development of sovereign AI frameworks.

Additionally, the effectiveness of fine-tuning is contingent upon the quality and diversity of the training data utilized. Enterprises must ensure that the data used for fine-tuning is representative of the tasks at hand, including out-of-distribution scenarios. This is particularly crucial for organizations operating in multilingual environments or specialized sectors where the model must understand technical terminology. By combining fine-tuning with robust data strategies, companies can enhance their AI systems’ performance and ensure that they meet the specific needs of their operations.

Embracing RAG in AI for Enhanced Performance

Retrieval augmented generation (RAG) is changing how organizations approach AI deployment. With RAG, enterprises can improve the output of their existing AI models without the exhaustive retraining otherwise required. At query time, RAG retrieves relevant data from an external source and supplies it to the model as context, allowing the model to produce more accurate and contextually relevant outputs. This method not only streamlines the update process but also helps organizations close the knowledge gaps that arise in rapidly evolving fields.

However, for RAG to be truly effective, enterprises must ensure that their data is well-structured and easily accessible. The success of RAG hinges on the quality of the external archives from which the model retrieves information. Companies must invest in data management practices that facilitate efficient retrieval processes, ensuring that the information fed into the AI system is accurate and up-to-date. By doing so, businesses can maximize the potential of RAG, leading to improved decision-making and operational efficiency.
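The retrieve-then-generate flow described above can be sketched in a few lines. This is a minimal illustration, not a production design: the three documents, the bag-of-words cosine ranking, and the function names `retrieve` and `build_prompt` are all assumptions made for the example, and the final call to an LLM is left out. Real systems typically use an embedding model and a vector store in place of the word-count ranking.

```python
import math
import re
from collections import Counter

# Hypothetical in-house knowledge base (an assumption for this sketch).
DOCUMENTS = [
    "Travel expenses above 500 euros require approval from the department head.",
    "The VPN client must be updated every quarter by the IT service desk.",
    "Customer refunds are processed within 14 days of the return being received.",
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, documents, k=1):
    """Return the k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(
        documents,
        key=lambda d: cosine(q, Counter(tokenize(d))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query, documents, k=1):
    """Assemble the prompt an LLM would receive: retrieved context plus the question."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


if __name__ == "__main__":
    print(build_prompt("How long do customer refunds take?", DOCUMENTS))
```

Updating the model's knowledge here means editing `DOCUMENTS`, not retraining anything, which is the property the section describes. It also shows the dependency on documentation: a policy that was never written down cannot be retrieved.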

The Significance of Sovereign AI for Enterprises

Sovereign AI is gaining traction as organizations seek to develop AI models tailored to their unique datasets and operational contexts. This concept emphasizes the importance of training AI systems using data sourced from within a nation or organization, which can enhance the relevance and applicability of the model’s outputs. Sovereign AI is particularly beneficial for governments and enterprises looking to maintain control over their data and ensure compliance with local regulations, thus fostering trust and accountability.

Incorporating sovereign AI strategies requires a foundational shift in how organizations approach AI development. It necessitates investments in local data infrastructure, collaboration with domestic data providers, and the establishment of robust protocols for data collection and utilization. By building sovereign AI frameworks, enterprises can not only enhance their operational effectiveness but also contribute to the development of a more resilient and self-sufficient AI ecosystem that aligns with national interests.

Innovative AI Training Architecture for Future Deployment

Advancements in AI training architecture, such as Aleph Alpha’s tokenizer-free (T-Free) training, are paving the way for more efficient AI deployment strategies. This innovative approach simplifies the fine-tuning process, allowing models to better understand and process out-of-distribution data. By reducing the reliance on traditional tokenization methods, organizations can achieve significant cost savings and reduce their carbon footprint during the training phase, making AI deployment more sustainable.

Furthermore, T-Free training architecture addresses a critical challenge faced by many enterprises: the ability to train AI models across multiple languages and dialects. This capability is increasingly important in today’s globalized economy, where businesses operate in diverse linguistic environments. By leveraging such advanced training architectures, organizations can enhance their AI models’ performance and scalability, ensuring they are well-equipped to handle complex, multilingual tasks effectively.
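The article does not describe how T-Free works internally. As a rough illustration of what a tokenizer-free input representation can look like, the sketch below maps each word to a sparse set of active indices by hashing its overlapping character trigrams. The function names, the `_` boundary padding, the SHA-256 hash, and the vector size of 1024 are all assumptions chosen for the example; this is a toy, not Aleph Alpha's implementation.

```python
import hashlib


def char_trigrams(word):
    """Split a word into overlapping character trigrams, padded with '_' at the edges."""
    padded = f"_{word.lower()}_"
    return [padded[i:i + 3] for i in range(len(padded) - 2)]


def sparse_pattern(word, dim=1024):
    """Map a word to a sorted list of active indices in a dim-sized vector.

    Each trigram is hashed to one index, so no learned vocabulary is needed.
    """
    active = set()
    for tri in char_trigrams(word):
        digest = hashlib.sha256(tri.encode("utf-8")).digest()
        active.add(int.from_bytes(digest[:4], "big") % dim)
    return sorted(active)


def overlap(a, b, dim=1024):
    """Jaccard overlap between the activation patterns of two words."""
    pa, pb = set(sparse_pattern(a, dim)), set(sparse_pattern(b, dim))
    return len(pa & pb) / len(pa | pb)


if __name__ == "__main__":
    print(char_trigrams("deploy"))
    print(overlap("deploy", "deploys"), overlap("deploy", "hardware"))
```

Because the representation is built from characters, spelling variants and unseen words from any language still receive a pattern that overlaps with related words, which is one intuition for why such schemes may cope better with multilingual and out-of-distribution text than a fixed token vocabulary.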

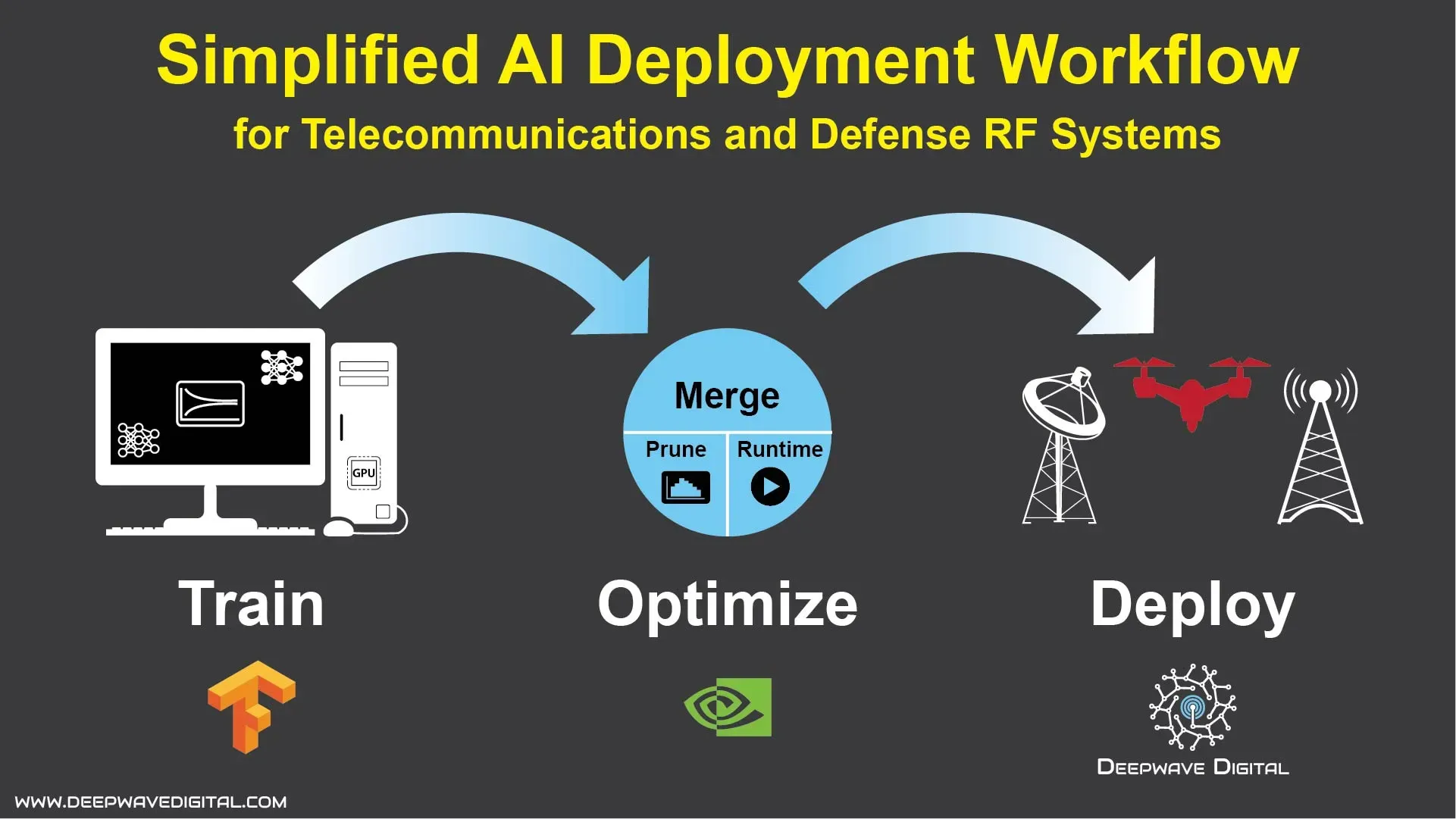

Challenges in AI Deployment: Hardware Considerations

When deploying sovereign AI, hardware considerations are paramount. Organizations must often navigate the complexities of using domestically produced hardware, which can introduce additional challenges in terms of compatibility, performance, and cost. Collaborations with hardware partners, such as AMD and Cerebras, are essential to ensure that enterprises have access to the acceleration hardware necessary for efficient AI model training.

The choice of hardware can significantly affect the success of AI deployment. Organizations need to assess their hardware capabilities critically and make informed decisions that align with their specific AI strategies. By investing in the right hardware infrastructure, companies can enhance their AI training and deployment processes, ultimately leading to improved performance and outcomes in their AI initiatives.

Looking Ahead: The Future of AI Applications

As the demand for AI applications continues to evolve, organizations must adapt to the increasing complexity of AI systems. The shift from simple models, such as chatbots, to more sophisticated agentic AI systems is transforming the landscape of business operations. This evolution necessitates a reevaluation of existing AI strategies and the adoption of innovative approaches that can handle complex problem-solving tasks.

Looking ahead, companies must invest in research and development to stay at the forefront of AI advancements. This includes exploring new methodologies, frameworks, and technologies that can enhance AI capabilities. By fostering a culture of innovation and continuous improvement, enterprises can position themselves to capitalize on emerging opportunities in the AI space, ensuring that they remain competitive in an increasingly technology-driven world.

Bridging Knowledge Gaps with AI Solutions

One of the significant challenges in AI deployment is bridging knowledge gaps that can lead to misconceptions and erroneous conclusions. Aleph Alpha’s Pharia Catch framework exemplifies how organizations can address these gaps by allowing for human consultation when conflicting data arises. This feedback mechanism not only enriches the AI’s knowledge base but also enhances its decision-making capabilities, ensuring that outputs are grounded in accurate and reliable information.

Effective knowledge management is critical in fostering trust and reliability in AI systems. Organizations must develop robust protocols for capturing and integrating human insights into their AI models. By implementing such solutions, businesses can enhance the overall efficacy of their AI deployments, leading to better performance and more informed decision-making processes that align with their strategic objectives.
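A feedback loop of this kind can be sketched as follows. This is a hypothetical illustration of the general pattern (detect conflicting records, ask a person, store the answer), not the Pharia Catch API: the `Fact` type, the functions `find_conflicts` and `resolve`, and the `ask_human` callback are all invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fact:
    subject: str  # what the fact is about, e.g. "refund_window_days"
    value: str    # the claimed value
    source: str   # where the claim came from


def find_conflicts(facts):
    """Return subjects for which the sources disagree, with their candidate facts."""
    by_subject = {}
    for fact in facts:
        by_subject.setdefault(fact.subject, []).append(fact)
    return {
        subject: group
        for subject, group in by_subject.items()
        if len({f.value for f in group}) > 1
    }


def resolve(facts, ask_human):
    """Build a knowledge base, escalating only conflicting subjects to a person.

    ask_human(subject, candidates) must return the value to keep.
    """
    conflicts = find_conflicts(facts)
    resolved = {f.subject: f.value for f in facts if f.subject not in conflicts}
    for subject, candidates in conflicts.items():
        resolved[subject] = ask_human(subject, candidates)
    return resolved


if __name__ == "__main__":
    facts = [
        Fact("refund_window_days", "14", "handbook-2024"),
        Fact("refund_window_days", "30", "wiki-2021"),
        Fact("vpn_update_cycle", "quarterly", "it-policy"),
    ]
    # Stand-in for a real review step: always trust the 2024 handbook.
    pick = lambda subject, cands: next(
        c.value for c in cands if c.source == "handbook-2024"
    )
    print(resolve(facts, pick))
```

Only the disputed subject reaches the reviewer; facts that all sources agree on pass through untouched, which keeps the human workload proportional to the actual disagreement in the data.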

Partnerships: Key to Successful AI Implementation

Strategic partnerships play a vital role in the successful implementation of AI technologies. Collaborations with established firms like PwC and Deloitte enable organizations to leverage external expertise and resources, facilitating the adoption of advanced AI solutions. These partnerships can provide essential support in navigating the complexities of AI deployment, from strategy formulation to execution.

Moreover, partnerships can enhance knowledge sharing and innovation within the AI ecosystem. By collaborating with tech leaders and research institutions, organizations can gain access to cutting-edge tools, frameworks, and methodologies that can accelerate their AI initiatives. As the AI landscape continues to evolve, fostering strong partnerships will be crucial for enterprises looking to stay ahead of the curve and achieve their AI deployment goals.

Frequently Asked Questions

What are the key AI deployment strategies for enterprises?

AI deployment strategies for enterprises include fine-tuning models, utilizing retrieval augmented generation (RAG), and developing sovereign AI systems. These strategies help organizations effectively integrate AI into applications, ensuring they meet specific business needs and maintain compliance with data governance.

How does fine-tuning models impact AI deployment in enterprise environments?

Fine-tuning models can enhance their performance for specific tasks, but it is not a one-size-fits-all solution. Effective AI deployment in enterprises often requires a combination of fine-tuning and other methods, such as RAG, to ensure that models can access updated and accurate information without retraining.

What is retrieval augmented generation (RAG) and how does it facilitate AI deployment?

Retrieval augmented generation (RAG) allows AI models to function like librarians by retrieving information from external archives. This approach simplifies AI deployment in enterprises by enabling models to access current data without the need for extensive retraining, thus enhancing accuracy and relevance.

What challenges do enterprises face when deploying sovereign AI?

Enterprises face several challenges when deploying sovereign AI, including the need for domestic data governance, hardware compatibility, and ensuring that institutional knowledge is well-documented. These factors are crucial for the successful integration of AI systems that are tailored to national datasets.

How does the AI training architecture influence deployment in enterprises?

The training architecture influences deployment mainly through its effect on training cost and carbon emissions. Aleph Alpha’s tokenizer-free (T-Free) architecture, for example, aims to reduce both while making it easier to fine-tune models on diverse datasets, which in turn makes deployment smoother and more efficient.

What role does institutional knowledge play in AI deployment strategies?

Institutional knowledge is vital in AI deployment strategies, especially when using methods like RAG. Well-documented knowledge ensures that AI models can retrieve accurate information, leading to better decision-making and reducing the risk of errors during deployment.

How can enterprises ensure effective AI deployment when using out-of-distribution data?

To ensure effective AI deployment with out-of-distribution data, enterprises should combine fine-tuning with retrieval augmented generation (RAG). This dual approach allows models to adapt to new data types while accessing a broader information base to improve their accuracy and reliability.

What partnerships can assist enterprises in deploying AI technologies?

Partnerships with firms like PwC and Deloitte can significantly assist enterprises in deploying AI technologies. These collaborations provide expertise in implementing advanced AI strategies, ensuring that organizations can effectively leverage AI capabilities for their specific needs.

What is the significance of hardware in AI deployment for enterprises?

Hardware plays a crucial role in AI deployment for enterprises, particularly when implementing sovereign AI systems. Organizations must ensure compatibility with domestically produced hardware and utilize acceleration technologies from partners like AMD and Cerebras to optimize AI training and deployment processes.

How is the landscape of AI deployment evolving in enterprises?

The landscape of AI deployment in enterprises is evolving towards more sophisticated applications, transitioning from simple chatbots to complex agentic AI systems. This shift reflects the growing demand for AI solutions that can tackle intricate problem-solving tasks, driving innovation and efficiency across industries.

| Key Point | Details |
|---|---|
| Training AI Challenges | Despite significant investments, integrating AI models into applications remains difficult. Fine-tuning is often misunderstood as a simple solution. |
| Retrieval Augmented Generation (RAG) | RAG allows LLMs to retrieve information from external sources, providing updates without retraining. However, it relies on well-documented institutional knowledge. |
| Sovereign AI | Aleph Alpha focuses on training models on domestic datasets, supporting governments and companies in developing tailored AI solutions. |
| Innovative Solutions | Aleph Alpha’s ‘T-Free’ architecture simplifies fine-tuning and reduces training costs significantly, emphasizing lower carbon footprints. |
| Partnerships | Collaboration with firms like PwC and Deloitte helps implement Aleph Alpha’s technologies effectively. |
| Hardware Challenges | Deployment is complicated by the need for domestic hardware, with Aleph Alpha partnering with companies like AMD and Cerebras. |
| Future Outlook | The industry is shifting towards more complex AI systems, moving beyond basic applications like chatbots. |

Summary

Deploying AI in the enterprise is a challenging yet essential endeavor for organizations looking to harness the potential of artificial intelligence. As highlighted in the insights from Aleph Alpha’s Jonas Andrulis, the journey from training AI models to implementing them in real-world applications is fraught with complexities. Techniques like fine-tuning and retrieval augmented generation (RAG) offer ways to enhance AI capabilities, but successful deployment requires careful attention to institutional knowledge and technological frameworks. Companies must not only invest in the right hardware and partnerships but also adopt strategies that fit their own needs. As the demand for more sophisticated AI applications grows, enterprises must be prepared to evolve alongside these advancements.